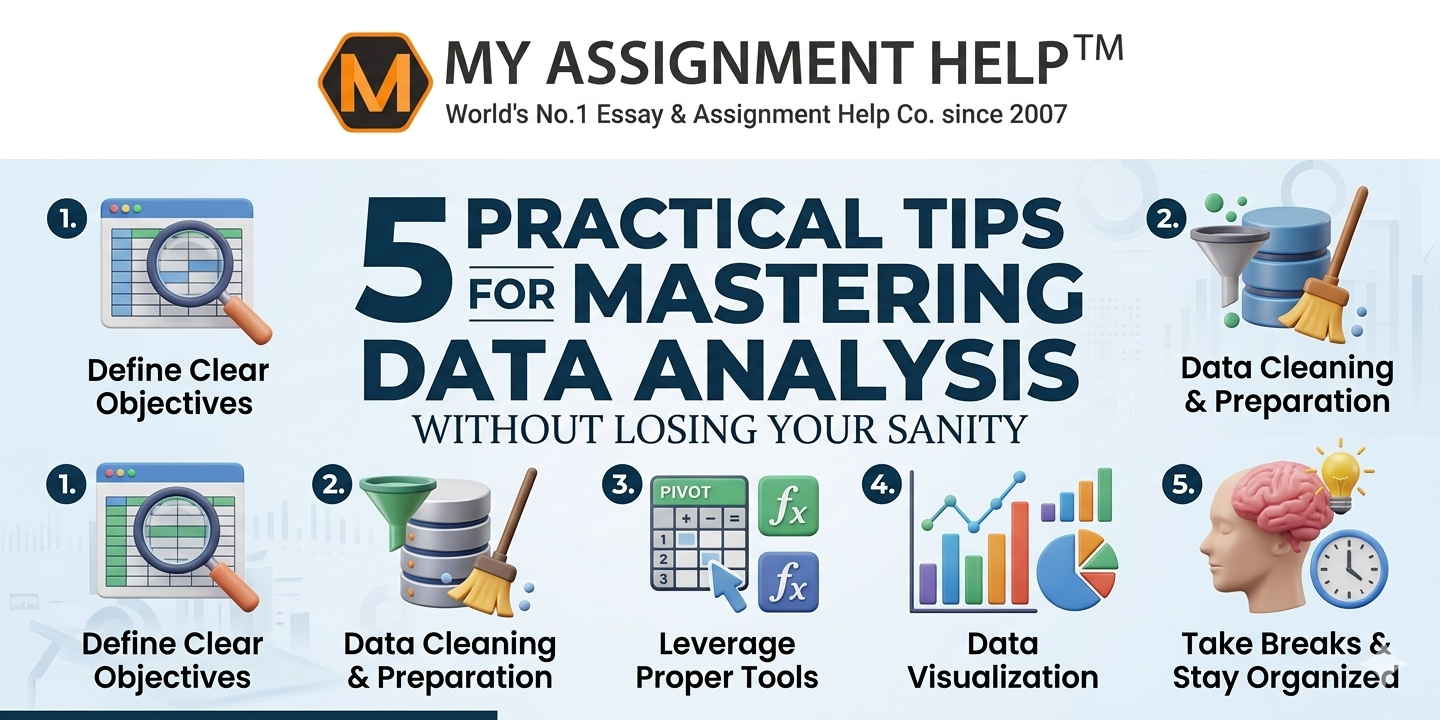

In the modern digital landscape, the ability to interpret numbers isn’t just a technical skill—it’s a critical survival mechanism. Whether you are a postgraduate researcher in London or a budding business analyst in Manchester, the sheer volume of information generated every second can be paralysing. Data analysis often feels like trying to drink from a high-pressure firehose; without a structured, disciplined approach, the risk of “analysis paralysis,” significant errors, or total mental burnout is exceptionally high.

Mastering this field requires far more than just technical fluency in Python, R, or SQL. It requires a sophisticated, strategic mindset that balances rigorous technical standards with personal mental well-being. If you find yourself drowning in endless spreadsheets and conflicting variables, seeking professional statistics assignment help can provide the necessary clarity to navigate complex datasets while maintaining the high academic and professional standards expected in the UK.

1. The “Cleanliness is Godliness” Rule: Preparation is 80% of Success

The most common mistake beginners and even intermediate analysts make is rushing into the “exciting” parts of the job (visualisation and predictive modelling) before the data is actually ready for use. A widely cited figure, popularised by Forbes, holds that data scientists spend roughly 80% of their time preparing data: collecting, cleaning, munging, and organising it. This isn’t “busy work”; it is the foundation of truth.

Practical Application for Your Workflow:

- Handle Missing Values Strategically: Do not simply delete rows with missing data. Decide early whether to use mean/median imputation or forward-filling, or whether the absence of data is an insight in itself.

- Standardise Your Formats: Inconsistent data (e.g., mixing “DD/MM/YYYY” with “MM/DD/YYYY” or blending GBP with USD without conversion) is a silent killer of accuracy.

- Audit for Duplicates: A single redundant entry can artificially inflate your sample size and skew a correlation, leading to false positives that are difficult to track down later.

By front-loading the “boring” work, you ensure that your final insights are built on a bedrock of integrity rather than a house of cards.
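The three checks above can be sketched in a few lines of pandas. This is a minimal illustration rather than a definitive pipeline: the column names (`order_id`, `order_date`, `amount_gbp`) and the sample values are hypothetical, and median imputation is only one of the options mentioned, chosen here for brevity.

```python
import pandas as pd

# Hypothetical raw data: one duplicated row, one missing amount,
# and dates stored as day-first (DD/MM/YYYY) strings.
raw = pd.DataFrame(
    {
        "order_id": [1, 2, 2, 3, 4],
        "order_date": ["03/01/2024", "04/01/2024", "04/01/2024",
                       "15/01/2024", "20/01/2024"],
        "amount_gbp": [120.0, 80.0, 80.0, None, 100.0],
    }
)


def clean(df: pd.DataFrame) -> pd.DataFrame:
    """Return a cleaned copy; the raw frame is left untouched."""
    out = df.copy()

    # 1. Audit for duplicates: drop rows that are exact repeats.
    out = out.drop_duplicates()

    # 2. Standardise formats: parse dates with an explicit day-first
    #    format so 03/01/2024 cannot be misread as 1 March.
    out["order_date"] = pd.to_datetime(out["order_date"], format="%d/%m/%Y")

    # 3. Handle missing values: keep a flag recording that the value was
    #    absent, then impute with the median of the observed amounts.
    out["amount_missing"] = out["amount_gbp"].isna()
    out["amount_gbp"] = out["amount_gbp"].fillna(out["amount_gbp"].median())

    return out.reset_index(drop=True)


cleaned = clean(raw)
print(cleaned)
```

Keeping the `amount_missing` flag means the fact that a value was absent is not lost after imputation, so it can still be examined later as a possible insight in its own right.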

2. Define the “Business Question” Before Touching Your Tools

Data is infinite, but your cognitive bandwidth is not. One of the quickest ways to lose your sanity is to enter a dataset without a map. Before opening Excel, Tableau, or a Jupyter Notebook, you must write down exactly what you are trying to prove, disprove, or discover. Without a specific hypothesis, you will likely fall into the trap of “Data Dredging”—the act of searching for patterns until you find something that looks significant but is actually a statistical fluke.
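To see why data dredging produces flukes, the following sketch runs many comparisons on pure random noise and counts how many pass the conventional 0.05 threshold. It is an illustration under stated assumptions: the data are simulated from a standard normal distribution (so a simple z-test with known variance applies), and the constants `N_TESTS`, `N_PER_GROUP`, and `ALPHA` are arbitrary choices for the demonstration.

```python
import random
from statistics import NormalDist, mean

random.seed(42)  # fixed seed so the run is repeatable

N_TESTS = 200      # number of unrelated "hypotheses" tried
N_PER_GROUP = 50   # observations in each group
ALPHA = 0.05       # conventional significance threshold


def noise_p_value(n: int) -> float:
    """Two-sided z-test p-value comparing two groups of pure N(0, 1) noise."""
    a = [random.gauss(0, 1) for _ in range(n)]
    b = [random.gauss(0, 1) for _ in range(n)]
    # Both groups have standard deviation 1 by construction, so the
    # difference in means has variance 1/n + 1/n = 2/n.
    z = (mean(a) - mean(b)) / (2 / n) ** 0.5
    return 2 * (1 - NormalDist().cdf(abs(z)))


p_values = [noise_p_value(N_PER_GROUP) for _ in range(N_TESTS)]
false_positives = sum(p < ALPHA for p in p_values)

print(f"{false_positives} of {N_TESTS} comparisons look 'significant' "
      "even though no real effect exists")
```

With a 0.05 threshold, roughly 5% of such comparisons are expected to look significant by chance alone, which is why a hypothesis written down in advance is a safer guide than a search through every available variable.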

In the UK, the Office for National Statistics (ONS) emphasises “User-Centred Data,” where every piece of analysis must serve a functional, real-world purpose. When the technical demands of a project grow too heavy and deadlines are looming, it can be more efficient to pay for assignment help with the technical work. This allows you to step back and focus on the broader conceptual framework and the “so what?” of your research, rather than getting bogged down in the minutiae of syntax errors.

3. Embrace the Power of Exploratory Data Analysis (EDA)

EDA is the process of “getting to know” your data through summaries and charts before performing formal, “high-stakes” modelling. Think of it as a first date with your dataset. It helps you spot outliers that could ruin your mean or identify non-linear relationships that a standard linear regression might completely miss.

Essential Tools for Sanity-Preserving EDA:

- Box Plots: These are indispensable for spotting outliers and understanding the “spread” of your data across different categories.

- Histograms: Best for understanding the distribution: is your data “Normal” (Bell Curve), or is it skewed to the left or right? The shape informs which statistical tests are appropriate, since many common tests assume approximately normal data.

- Correlation Matrices: A quick heatmap can show you which variables are moving together, helping you narrow your focus to the relationships that actually matter.
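The numbers behind these three charts can be computed before anything is plotted. The sketch below is a hedged example on a hypothetical dataset (the column names and values are invented for illustration); it applies the 1.5 × IQR rule that box plots conventionally use for whiskers, measures skewness, and builds the correlation matrix that a heatmap would display.

```python
import pandas as pd

# Hypothetical dataset with one extreme commute time.
df = pd.DataFrame(
    {
        "hours_studied": [2, 4, 5, 6, 7, 8, 9, 10],
        "exam_score": [45, 52, 58, 61, 66, 70, 75, 79],
        "commute_mins": [20, 25, 22, 30, 28, 24, 26, 180],
    }
)

# Summary statistics: count, mean, std, min, quartiles, max per column.
summary = df.describe()


def iqr_outliers(series: pd.Series) -> pd.Series:
    """Values beyond 1.5 * IQR from the quartiles (the usual box plot rule)."""
    q1, q3 = series.quantile(0.25), series.quantile(0.75)
    iqr = q3 - q1
    mask = (series < q1 - 1.5 * iqr) | (series > q3 + 1.5 * iqr)
    return series[mask]


outliers = iqr_outliers(df["commute_mins"])

# Skewness: values well above 0 indicate a long right tail.
skewness = df.skew()

# Pearson correlation matrix: the numbers a heatmap would colour.
corr = df.corr()

print(summary)
print("Outliers in commute_mins:", outliers.tolist())
print(skewness)
print(corr.round(2))
```

If matplotlib is installed, `df.boxplot()` and `df.hist()` draw the corresponding charts directly from the same frame.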

4. Implement the “Pomodoro” Technique for Coding and Debugging

Data analysis requires deep, prolonged cognitive focus. Most instances of “sanity loss” occur during the debugging phase, when a single misplaced comma or bracket in your code breaks an entire script. The human brain is not wired to stare at lines of code for six consecutive hours; after a few hours without a break, your ability to spot errors declines markedly.

The “Sane Analyst” Strategy:

Utilise 25-minute focused “sprints” followed by a 5-minute total disconnect (no screens). After four such cycles, take a 30-minute break away from your desk. This prevents “code blindness,” a state where you stop seeing obvious errors because your brain is trying to fill in the gaps with what it thinks should be there rather than what is actually on the screen.

5. Document Everything: The “Future Self” Insurance Policy

Mastering data analysis means being able to replicate your results. If you cannot explain exactly how you got from Point A (Raw Data) to Point B (Conclusion) three weeks after finishing the work, the analysis is technically and ethically flawed. In the academic world, reproducibility is the gold standard of trustworthy research.

- Comment Your Code Liberally: Don’t just explain what the code does; explain why you chose that specific threshold or filter.

- Maintain a Change Log: Keep a simple Markdown or Word file noting every major change. If you decide to exclude “outliers above the 99th percentile,” document that decision and the justification behind it.

- Version Control: Use tools like Git or even simple numbered file versions (v1, v2, v3). Never overwrite your raw data file; always work on a copy.
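The habits above can be partly automated. The following is a minimal sketch using only the Python standard library; the helper names (`file_checksum`, `working_copy`, `log_change`), the file names, and the log format are all hypothetical choices for illustration, not an established convention.

```python
import hashlib
import tempfile
from datetime import date
from pathlib import Path


def file_checksum(path: Path) -> str:
    """SHA-256 digest of a file, used to confirm the raw data is unchanged."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def working_copy(raw_path: Path, work_dir: Path) -> Path:
    """Copy the raw file into work_dir; analysis only ever touches the copy."""
    work_dir.mkdir(parents=True, exist_ok=True)
    copy_path = work_dir / raw_path.name
    copy_path.write_bytes(raw_path.read_bytes())
    return copy_path


def log_change(log_path: Path, message: str) -> None:
    """Append a dated Markdown bullet describing a decision and its reason."""
    with log_path.open("a", encoding="utf-8") as log:
        log.write(f"- {date.today().isoformat()}: {message}\n")


# Demonstration in a temporary directory with a made-up raw file.
with tempfile.TemporaryDirectory() as tmp:
    tmp_dir = Path(tmp)
    raw_path = tmp_dir / "survey_raw.csv"
    raw_path.write_text("id,score\n1,42\n2,57\n", encoding="utf-8")
    checksum_before = file_checksum(raw_path)

    copy_path = working_copy(raw_path, tmp_dir / "work")
    # Edit the copy only (here: append a row); the raw file stays as it was.
    with copy_path.open("a", encoding="utf-8") as f:
        f.write("3,61\n")

    log_path = tmp_dir / "CHANGELOG.md"
    log_change(log_path, "Excluded outliers above the 99th percentile; "
                         "reason: suspected data-entry errors (example)")

    raw_unchanged = file_checksum(raw_path) == checksum_before
    log_text = log_path.read_text(encoding="utf-8")

print("Raw file unchanged:", raw_unchanged)
print(log_text)
```

Recording the checksum of the raw file at the start of a project gives a quick way to prove later that the original data was never altered.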

Key Takeaways for High-Performance Analysis

| Step | Action | Strategic Benefit |
| --- | --- | --- |
| Preparation | Deep Cleaning & Auditing | Prevents the “Garbage In, Garbage Out” (GIGO) effect. |
| Strategy | Hypothesis Definition | Eliminates aimless “data fishing” and saves hours of work. |
| Visualization | Iterative EDA | Catches structural issues before they ruin your final model. |
| Mental Health | Time-Blocked Sprints | Minimises burnout and maintains high accuracy in coding. |
| Integrity | Comprehensive Documentation | Ensures your work is reproducible and academically sound. |

Frequently Asked Questions (FAQ)

Q: Which software is most recommended for UK students?

A: For social and medical sciences, SPSS remains the academic standard. For data science, finance, and engineering, Python (Pandas/NumPy/Scikit-learn) or R are preferred due to their open-source nature and massive library ecosystems.

Q: How do I know if my findings are “statistically significant”?

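A: In most UK academic and professional research, a p-value of less than 0.05 is the standard threshold. It means that, if there were truly no effect (the null hypothesis), results at least as extreme as yours would be expected less than 5% of the time. It is not the probability that your findings are due to chance, and it says nothing about the size or practical importance of the effect.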
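One way to make the meaning of a p-value concrete is a permutation test, sketched below with the standard library only. The two groups of exam scores are hypothetical, and the permutation test is just one of several valid approaches; the idea is to shuffle the group labels many times and count how often chance alone produces a difference at least as large as the observed one.

```python
import random
from statistics import mean

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical exam scores under two teaching methods.
group_a = [72, 75, 78, 80, 83, 85, 88, 90]
group_b = [60, 62, 65, 68, 70, 71, 74, 77]

observed = mean(group_a) - mean(group_b)

pooled = group_a + group_b
n_a = len(group_a)
N_PERMUTATIONS = 10_000

at_least_as_extreme = 0
for _ in range(N_PERMUTATIONS):
    # Under the null hypothesis the labels are interchangeable,
    # so reshuffle them and recompute the difference in means.
    random.shuffle(pooled)
    diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
    if abs(diff) >= abs(observed):
        at_least_as_extreme += 1

# Adding 1 to numerator and denominator counts the observed labelling
# itself and prevents reporting a p-value of exactly zero.
p_value = (at_least_as_extreme + 1) / (N_PERMUTATIONS + 1)

print(f"Observed difference in means: {observed}")
print(f"Two-sided permutation p-value: {p_value:.4f}")
```

A small p-value here says only that a difference this large would be rare if the teaching method made no difference; whether the difference matters in practice is a separate question.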

Q: What is the biggest cause of frustration in data projects?

A: “Dirty” data. Working with uncleaned datasets leads to constant errors, nonsensical visualisations, and inconsistent results, which is why the preparation phase is the most critical for your sanity.

References & Sources

- Office for National Statistics (2024). “Code of Practice for Statistics: Quality, Value, and Trustworthiness.” London, UK.

- University of Oxford (2023). “Best Practices in Research Data Management and Reproducibility.”

- Forbes Technology Council. “The 80/20 Rule of Data Science: Why Cleaning is the Real Work.”

- British Journal of Statistics. “Mental Health and Cognitive Load in Quantitative Research Roles.”

About the Author

Dr. Alistair Graham is a Senior Academic Consultant and Content Strategist at MyAssignmentHelp. With over 12 years of experience in Applied Statistics and Data Strategy, he has mentored thousands of students across the UK in mastering complex quantitative methods. He specialises in making data-driven storytelling accessible, ensuring that technical rigour never comes at the cost of clarity or mental well-being.